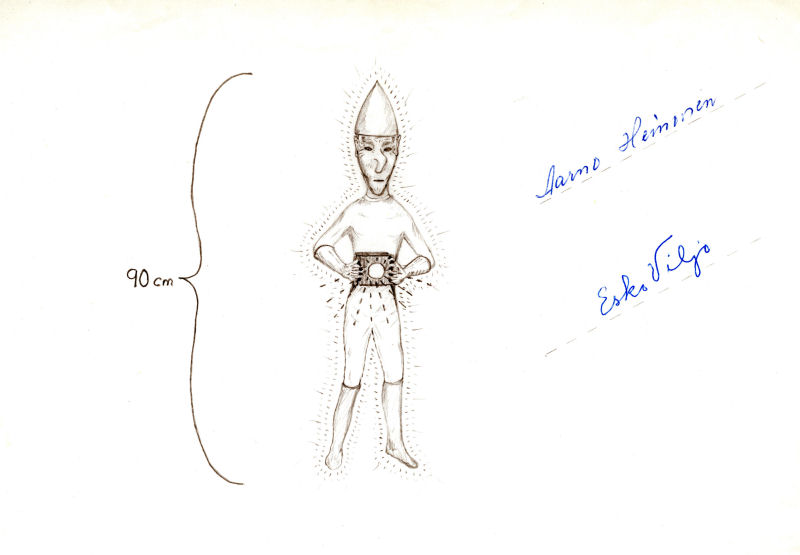

As my professional corrupting-the-youth wound down, I had been inclined to essay a few thoughts on the Ultra/Cryptoterrestrial-as-ecological-ideal, but, given the recent hysteria around and about Artificial Intelligence (AI), General (AGI) and otherwise, and now the latest tempest-in-the-ufological-teapot, David Grusch’s “revelations” about “The Program”, its baker’s dozen of captured nonhuman vehicles and some of their pilots (…), it seems more urgent to address, again, the concept of intelligence with regard both to artificial “intelligence” and to speculations about extraterrestrial, nonhuman intelligences, since a perverse notion of “intelligence” determines both.

Today’s concerns over AI and AGI are an illuminating index of an idea of “intelligence” at work in society-at-large (which society? which groups within it, exactly?…). There is strong warrant for maintaining that AI and AGI are not in any concrete, robust sense “intelligent” at all; while, in the same breath, one cannot merely brush aside the very real, operative (if however poorly-reflected and unconscious) understanding of “intelligence” guiding AI/AGI research and development and the no-less real and admittedly-concernful implications this technology holds for the future, near and far.

Geoffrey Hinton, who recently left Google’s AI labs, is, at the moment, the loudest voice in this room, and a good example. Consider the following, from recent conversations Hinton conducted with The Guardian. First, from the article linked above:

At the core of his concern was the fact that the new machines were much better – and faster – learners than humans. “Back propagation may be a much better learning algorithm than what we’ve got. That’s scary … We have digital computers that can learn more things more quickly and they can instantly teach it to each other. It’s like if people in the room could instantly transfer into my head what they have in theirs.”

And, from another, recent article:

“You need to imagine something more intelligent than us by the same difference that we’re more intelligent than a frog. And it’s going to learn from the web, it’s going to have read every single book that’s ever been written on how to manipulate people, and also seen it in practice.”

Notice the too-easy conflation of information technology and the human brain (where’s the human being?). The new machines are better and faster at learning than humans, because the machines operate according to “a much better learning algorithm than what we’ve got.” (I pass over the egregiously mistaken belief that the internet contains “every single book that’s ever been written on how to manipulate people” and that an AI can “see” (understand) such manipulation “in practice”…). Can a machine, strictly (i.e., in the sense we apply this word to a human being or another organism, for that matter), be said “to learn”, and does the human brain or being learn in an algorithmically-governed way?

Hinton’s thinking comes out of his research background. A cognitive psychologist and computer scientist by training, he “for the last 50 years,” as he says, has “been trying to make computer models that can learn stuff a bit like the way the brain learns it, in order to understand better how the brain is learning things.” As with too many contemporaries, Hinton’s thinking drifts from trying to model the workings of the brain via computer to conceiving the brain as a computer: “A ‘biological intelligence’ such as ours, he says, has advantages. It runs at low power, ‘just 30 watts, even when you’re thinking’.” What results is a too-easy, default equating of machine and brain (let alone mind). This identification, neither deeply nor rigorously reflected, by virtue of which the brain is conceived as a machine, by the same token warrants speaking of machines as if they were brains or minds, learning, discerning, or otherwise displaying “intelligence” if not self-awareness. No computer “remembers” or, by extension, “learns”, or “recognizes” or “thinks”: such expressions are all anthropomorphic shorthand, grasped at by cyberneticists out of necessity (lacking a vocabulary specific to the functioning of the calculations of what Victorians termed a “difference engine”), that have since gone on to bestow an illusion of humanity on the nonhuman, let alone nonliving. Much of the anxiety about AGI finds its source in linguistic confusion.

One could say more: that “intelligence” evolved (…); that it is embodied (that it’s not just a property or faculty of a brain); that it is ecological (it is a relation between an organism and its environment, the driver for its evolution); and, at least in Homo sapiens, social or cultural (articulated in symbolic systems, such as natural language). For these (and other) reasons, Large Language Models, such as ChatGPT, do not write, speak, or mean, as the software does not exist in a world the way human beings do, a situation which grounds intention and reference, in a word, meaning. ChatGPT is an example of what theorists Julia Kristeva and Roland Barthes termed “intertext”, “the already written”, precisely the sample ChatGPT statistically analyzes for syntactical patterns to reproduce….

So, if AIs are not, strictly, “intelligent,” what, then, of those technologically-advanced aliens imagined by the ufologically-inclined who adhere to the Extraterrestrial Hypothesis (ETH) regarding the origin of UFOs or that more sober concept at work in the Search for Extraterrestrial Intelligence (SETI)? What’s at work in both is undoubtedly complex. In one regard, there is the supposition that, where conditions are right (as they were on earth), natural processes produce life, life evolves awareness/consciousness/sentience and intelligence, and intelligence produces technology, which advances in sophistication and power. However, we do not know how or which natural processes produce life, nor the material/biological bases for consciousness. And, as readers of the Skunkworks will know, that conjunction of intelligence and technology is itself not unproblematic.

Indeed, the very conjunction of the two is obscure. At times, it seems technology is the sign or symptom or, logically, the sufficient condition of/for intelligence (as in SETI’s search for technosignatures, whether signals or traces of industrial processes in an exoplanet’s atmosphere or the dimming of a star caused by a Dyson Sphere); in the same breath, intelligence (of a certain order) is thought to imply the development of technology. Thus, intelligence and technology are assumed to be mutually implicated. However, the “intelligence” that invents what we now experience as technology (which is distinct from mere tool use) is not general intelligence (let alone “consciousness”) but instrumental reason, its teleological and calculative use. More profoundly, technology-as-we-know-it in this industrial/post-industrial sense is more the product of social, material processes than some ideal/mental/spiritual March of Progress. As such, it is profoundly historical, in a manner even more contingent and aleatoric than that process of evolution that supposedly gave rise to consciousness and intelligence. Indeed, the more closely the concrete history of the advent of today’s “advanced societies” is scrutinized the more complex and contingent the relation between “intelligence” and “technology” becomes. The search for extraterrestrial, technological civilizations comes to appear as little more than the laughable and futile search for some exoplanetary version not even of “ourselves” but of what has been termed “the First World” (or some science-fictional projection thereof).

Both the ETH and SETI are skewed by their identifying “intelligence” and “technology” with the example of a small minority of one species on one planet, an identification which serves more to reinforce and reproduce a certain social formation of that species than to illuminate not only life on other worlds but life on this one. Indeed, the more animal and even plant “intelligence” is researched, freed from ontotheological, anthropocentric blinders, the more intelligence is seen to transcend mere instrumental reason. In this regard, philosopher Justin E Smith-Ruiu’s “Against Intelligence” makes a lively, eloquent case for a radical expansion of the concept of intelligence, which I summarize, here:

…the only idea we are in fact able to conjure of what intelligent beings elsewhere may be like is one that we extrapolate directly from our idea of our own intelligence. And what’s worse, in this case the scientists are generally no more sophisticated than the folk….

One obstacle to opening up our idea of what might count as intelligence to beings or systems that do not or cannot “pass our tests” is that, with this criterion abandoned, intelligence very quickly comes to look troublingly similar to adaptation, which in turn always seems to threaten tautology. That is, an intelligent arrangement of things would seem simply to be the one that best facilitates the continued existence of the thing in question; so, whatever exists is intelligent….

it may in fact be useful to construe intelligence in just this way: every existing life-form is equally intelligent, because equally well-adapted to the challenges the world throws its way. This sounds audacious, but the only other possible construal of intelligence I can see is the one that makes it out to be “similarity to us”…

Ubiquitous living systems on Earth, that is —plants, fungi, bacteria, and of course animals—, manifest essentially the same capacities of adaptation, of interweaving themselves into the natural environment in order to facilitate their continued existence, that in ourselves we are prepared to recognize as intelligence….

There is in sum no good reason to think that evolutionary “progress” must involve the production of artifices, whether in external tools or in representational art. In fact such productions might just as easily be seen as compensations for a given life form’s inadequacies in facing challenges its environment throws at it. An evolutionally “advanced” life form might well be the one that, being so well adapted, or so well blended into its environment, simply has no need of technology at all.

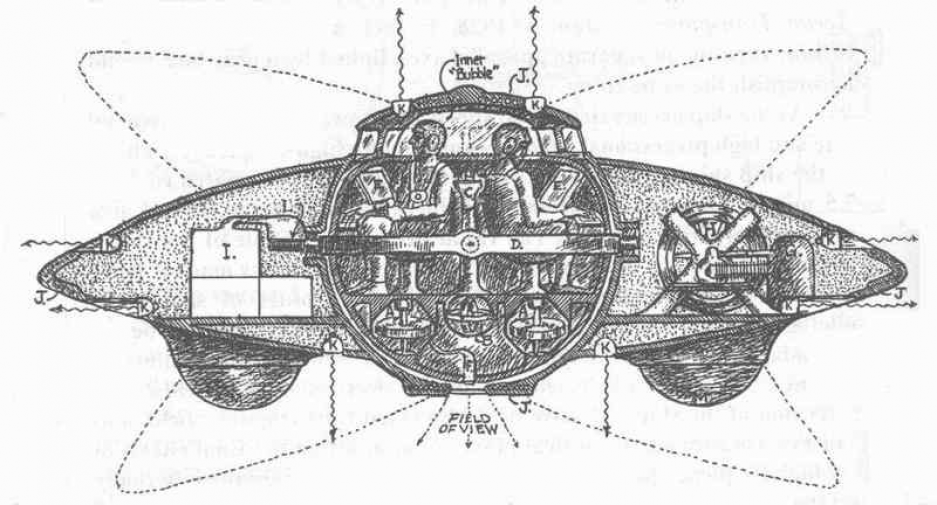

But such a life form will also be one that has no inclination to display its ability to ace our block-stacking tests or whatever other proxies of intelligence we strain to devise. Such life forms are, I contend, all around us, all the time. Once we convince ourselves this is the situation here on Earth, moreover, the presumption that our first encounter with non-terrestrial life forms will be an encounter with spaceship-steering technologists comes to appear as a risible caricature.

In this light, there is no such “thing” as intelligence (just as, as Fichte argued so long ago, there is no such “thing” as consciousness). “Intelligence” is a concept, a construct, variously articulated and put to various uses throughout its history. Thus, the concept calls for vigilant scrutiny whenever and wherever it is deployed, to strip it of its apparent, reflexive naturalness and reveal its limits and, more importantly, the purposes to which it is being put. Indeed, how better to enable ourselves to recognize a truly Other instance of “intelligence” than by freeing the concept from the human-all-too-human version that masquerades as intelligence-itself?

Excursus

The problematic broached here is one that demands an entire research program. With regard to “intelligence”, what is demanded is a “destruktive” history of the concept that would desediment and dissolve its apparent univocity and naturalness. At the very least, such a study would splinter that apparent unity into the various ways the concept has been developed and debated in the psychological literature.

In terms of the conjunction of intelligence and technology in SETI, at least one dilemma presents itself. Is the conjunction “Platonic” (let alone ideological) or is it possible to argue for a real possibility of an extraterrestrial, technologically-advanced civilization on purely statistical, probabilistic grounds? (My intuition is that even this latter argument, however valid, is motivated by a certain, covert Platonism….).